[PyTorch] Deep Learning Beginner Notes (13): A Small Hands-on Model and Using Sequential

I'm 雪天鱼, an FPGA enthusiast whose research focuses on FPGA architecture exploration and digital IC design.

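Follow the WeChat official account 【集成电路设计教程】 (IC Design Tutorials) for more learning materials and an invitation to the "IC Design Discussion Group".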

QQ group for IC design & FPGA & DL discussion, group number: 866169462.

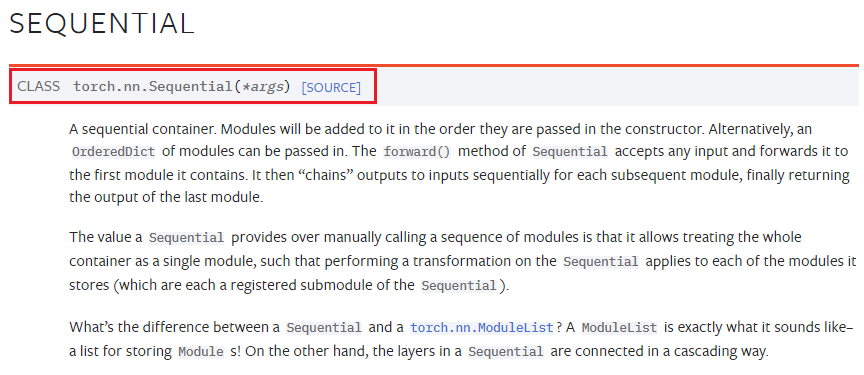

1. Introduction to Sequential

Official documentation: https://pytorch.org/docs/stable/generated/torch.nn.Sequential.html?highlight=sequential#torch.nn.Sequential

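`nn.Sequential` is a sequential container. It stores layers in the order they are passed in. When called, it feeds the input to the first layer, passes that layer's output to the next layer, and so on. This saves you from writing out every step by hand in `forward`.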
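To make "pass the input through each layer in turn" concrete, here is a simplified sketch of the idea behind `Sequential`. `MySequential` is a hypothetical name, and this is not PyTorch's actual source. The real `nn.Sequential` also supports indexing, slicing and `OrderedDict` input, so treat this only as an illustration. The input shape (single-channel 28x28 images) is an assumption chosen for the demo.

```python
import torch
from torch import nn


class MySequential(nn.Module):
    """Hypothetical, simplified stand-in for nn.Sequential (illustration only)."""

    def __init__(self, *layers):
        super().__init__()
        # Register each layer as a submodule named "0", "1", ... so that its
        # parameters are tracked and it appears in print(model).
        for idx, layer in enumerate(layers):
            self.add_module(str(idx), layer)

    def forward(self, x):
        # _modules keeps insertion order, so the layers run in the order given;
        # each layer's output becomes the next layer's input.
        for layer in self._modules.values():
            x = layer(x)
        return x


layers = [nn.Conv2d(1, 20, 5), nn.ReLU(), nn.Conv2d(20, 64, 5), nn.ReLU()]
mine = MySequential(*layers)
official = nn.Sequential(*layers)  # same layer objects, hence the same weights

x = torch.randn(2, 1, 28, 28)  # assumed input: 2 single-channel 28x28 images
print(torch.allclose(mine(x), official(x)))  # True
print(mine(x).shape)  # torch.Size([2, 64, 20, 20])
```

For brevity the sketch reads the internal `_modules` dict directly. Registering the layers with `add_module` (rather than keeping them in a plain Python list) is what lets PyTorch see their parameters.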

Example:

from torch import nn

# Using Sequential to create a small model. When `model` is run,
# input will first be passed to `Conv2d(1,20,5)`. The output of
# `Conv2d(1,20,5)` will be used as the input to the first
# `ReLU`; the output of the first `ReLU` will become the input
# for `Conv2d(20,64,5)`. Finally, the output of
# `Conv2d(20,64,5)` will be used as input to the second `ReLU`
model = nn.Sequential(
    nn.Conv2d(1, 20, 5),
    nn.ReLU(),
    nn.Conv2d(20, 64, 5),
    nn.ReLU(),
)
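Here is a short sketch of two other ways to use `nn.Sequential` with the same four layers. First, you can build it from an `OrderedDict` so that each layer gets a readable name. Second, you can index or slice it to run only part of the network. The layer names (`conv1`, `relu1`, ...) and the 1-channel 28x28 input are assumptions for illustration; the official example specifies neither.

```python
from collections import OrderedDict

import torch
from torch import nn

# Same layers as the example above, but each one gets a readable name.
model = nn.Sequential(OrderedDict([
    ("conv1", nn.Conv2d(1, 20, 5)),
    ("relu1", nn.ReLU()),
    ("conv2", nn.Conv2d(20, 64, 5)),
    ("relu2", nn.ReLU()),
]))

x = torch.randn(1, 1, 28, 28)  # assumed input: one 1-channel 28x28 image

print(model(x).shape)            # torch.Size([1, 64, 20, 20])
print(model.conv1)               # access a layer by its name
print(model[0] is model.conv1)   # True: integer indexing still works
print(model[:2](x).shape)        # a slice is a new Sequential: torch.Size([1, 20, 24, 24])
```

Slicing is handy for inspecting intermediate feature maps without writing a separate model.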

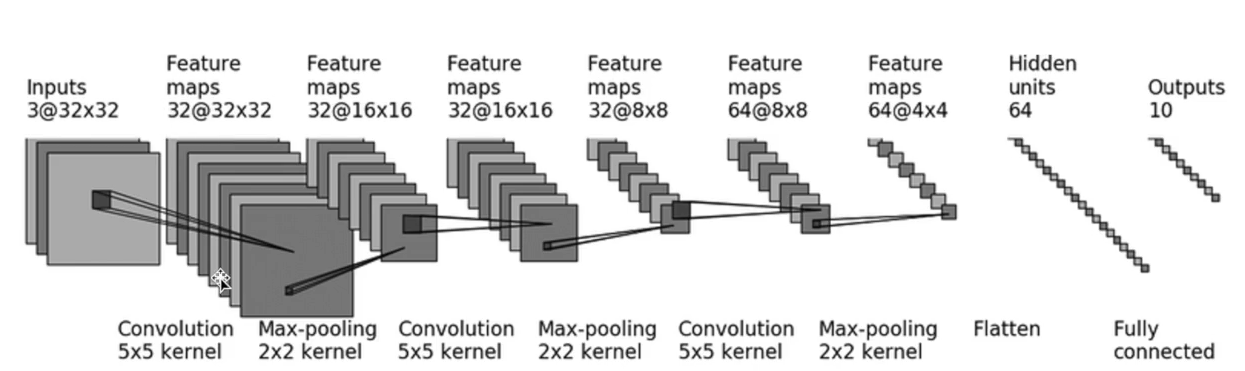

2. Hands-on: Building a Model for CIFAR10

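Target model:

Convolution -> max pooling -> convolution -> max pooling -> convolution -> max pooling -> flatten -> fully connected (two `Linear` layers: 1024 -> 64 -> 10)

- Max pooling does not change the number of channels; it only shrinks the height and width.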

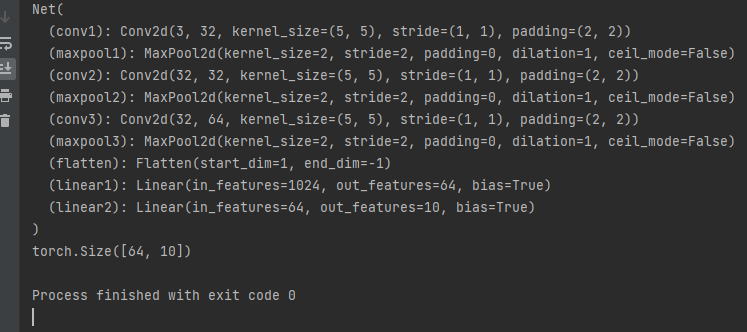
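Why does the first `Linear` layer take 1024 inputs? The output-size formula in the PyTorch `Conv2d`/`MaxPool2d` documentation answers this. The helper below is a small sketch that traces a 32x32 CIFAR10 image through the three conv + pool stages; its function names are my own, not part of PyTorch. A 5x5 kernel with `padding=2` and stride 1 keeps the height and width unchanged, and each `MaxPool2d(2)` halves them.

```python
def conv2d_out(size, kernel, stride=1, padding=0, dilation=1):
    """Output height/width of Conv2d (formula from the PyTorch Conv2d docs)."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def maxpool2d_out(size, kernel):
    """Output height/width of MaxPool2d with the default stride (= kernel) and no padding."""
    return (size - kernel) // kernel + 1


def trace_cifar10_shapes(size=32):
    """Follow (channels, height/width) through conv -> pool three times."""
    channels, hw = 3, size
    steps = []
    for out_channels in (32, 32, 64):
        hw = conv2d_out(hw, kernel=5, padding=2)  # 5x5 conv, padding=2: H and W unchanged
        channels = out_channels
        steps.append(("conv", channels, hw))
        hw = maxpool2d_out(hw, kernel=2)          # halves H and W, channel count unchanged
        steps.append(("maxpool", channels, hw))
    flat = channels * hw * hw                     # features per sample after Flatten
    return steps, flat


steps, flat = trace_cifar10_shapes()
for step in steps:
    print(step)
print("flattened features:", flat)  # flattened features: 1024
```

The final feature map is 64 channels x 4 x 4, which flattens to 64 * 16 = 1024 values. That is why the code below uses `Linear(1024, 64)`.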

3. Network code without Sequential

import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = Conv2d(3, 32, 5, padding=2)    # 3x32x32 -> 32x32x32
        self.maxpool1 = MaxPool2d(2)                # -> 32x16x16
        self.conv2 = Conv2d(32, 32, 5, padding=2)   # -> 32x16x16
        self.maxpool2 = MaxPool2d(2)                # -> 32x8x8
        self.conv3 = Conv2d(32, 64, 5, padding=2)   # -> 64x8x8
        self.maxpool3 = MaxPool2d(2)                # -> 64x4x4
        self.flatten = Flatten()                    # -> 1024 (= 64*4*4)
        self.linear1 = Linear(1024, 64)             # -> 64
        self.linear2 = Linear(64, 10)               # -> 10 class scores

    def forward(self, x):
        x = self.conv1(x)
        x = self.maxpool1(x)
        x = self.conv2(x)
        x = self.maxpool2(x)
        x = self.conv3(x)
        x = self.maxpool3(x)
        x = self.flatten(x)
        x = self.linear1(x)
        x = self.linear2(x)
        return x


net = Net()
print(net)

input = torch.ones((64, 3, 32, 32))   # dummy batch: 64 images, 3 channels, 32x32
output = net(input)
print(output.shape)                   # torch.Size([64, 10])

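Output (the last line is `torch.Size([64, 10])`, i.e. 10 class scores for each of the 64 input images):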

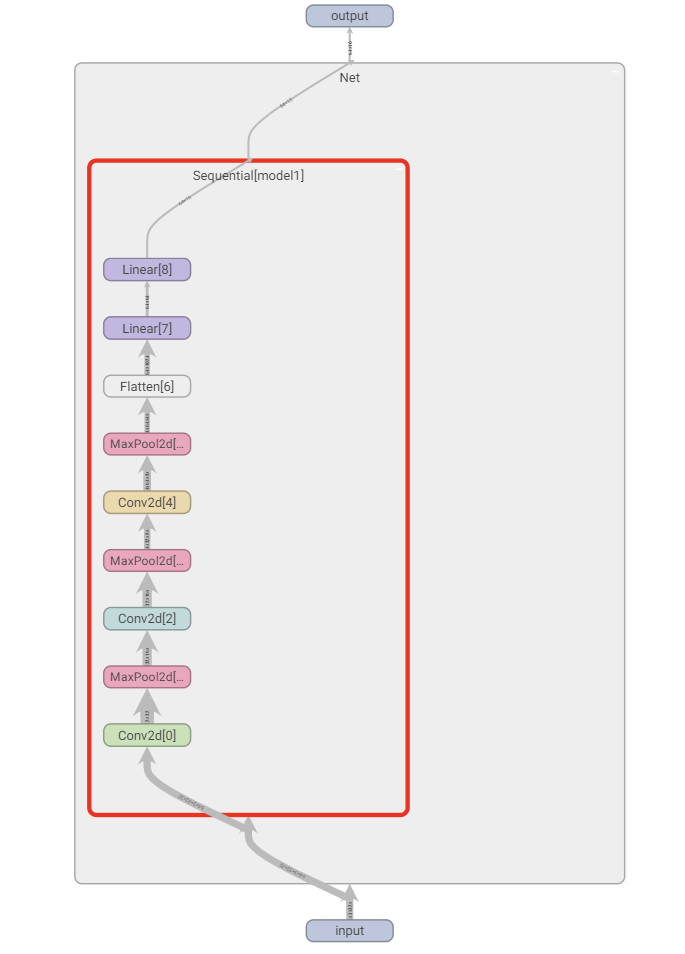

4. Network code with Sequential

import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.tensorboard import SummaryWriter


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)   # one call runs every layer in order
        return x


net = Net()
print(net)

input = torch.ones((64, 3, 32, 32))
output = net(input)
print(output.shape)          # torch.Size([64, 10])

writer = SummaryWriter("./logs_seq")   # event files are written to ./logs_seq
writer.add_graph(net, input)           # trace the model with `input` and log its graph
writer.close()

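As you can see, the code is somewhat more concise. The layers are listed once inside `Sequential`, and `forward` shrinks to a single call.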
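The sketch below checks that the two versions really describe the same network. It copies the weights of one model into the other and compares their outputs. The class names `NetPlain` and `NetSeq` are hypothetical; they are chosen so that both models can live in one file. The check relies on one assumption: both models register their parameters in the same order with the same shapes. That holds here because the layers are declared in the same order.

```python
import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential


class NetPlain(nn.Module):
    """Layers as separate attributes (the version without Sequential)."""

    def __init__(self):
        super().__init__()
        self.conv1 = Conv2d(3, 32, 5, padding=2)
        self.maxpool1 = MaxPool2d(2)
        self.conv2 = Conv2d(32, 32, 5, padding=2)
        self.maxpool2 = MaxPool2d(2)
        self.conv3 = Conv2d(32, 64, 5, padding=2)
        self.maxpool3 = MaxPool2d(2)
        self.flatten = Flatten()
        self.linear1 = Linear(1024, 64)
        self.linear2 = Linear(64, 10)

    def forward(self, x):
        x = self.maxpool1(self.conv1(x))
        x = self.maxpool2(self.conv2(x))
        x = self.maxpool3(self.conv3(x))
        return self.linear2(self.linear1(self.flatten(x)))


class NetSeq(nn.Module):
    """The same layers wrapped in one Sequential."""

    def __init__(self):
        super().__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2), MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2), MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2), MaxPool2d(2),
            Flatten(), Linear(1024, 64), Linear(64, 10),
        )

    def forward(self, x):
        return self.model1(x)


plain, seq = NetPlain(), NetSeq()

# Parameters come out in the same order with the same shapes in both models,
# so copying them pairwise gives the two networks identical weights.
with torch.no_grad():
    for p_seq, p_plain in zip(seq.parameters(), plain.parameters()):
        p_seq.copy_(p_plain)

x = torch.ones((64, 3, 32, 32))
print(torch.allclose(plain(x), seq(x)))             # True
print(sum(p.numel() for p in plain.parameters()))   # 145578
```

The `state_dict()` keys differ between the two models (`conv1.weight` versus `model1.0.weight`). As a result, `load_state_dict` cannot be used to move weights between them directly, which is why the sketch copies parameters pairwise instead.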

To view the model graph in TensorBoard, run `tensorboard --logdir=./logs_seq` and open the GRAPHS tab.
