Elasticsearch Study Notes (5): Analyzers

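1. What Is Analysis

As the name suggests, text analysis is the process of converting full text into a series of terms (tokens). It is also called tokenization.

In ES, analysis is carried out by an analyzer. You can use one of the analyzers built into ES or define a custom analyzer as needed.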

2. Components of an Analyzer

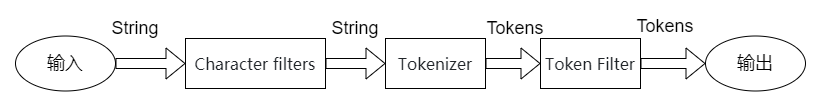

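Having briefly covered Analysis and Analyzer, let's look at what an analyzer is made of.

An analyzer is the component dedicated to tokenization. It consists of three parts:

- Character Filters: process the raw text, for example by stripping HTML tags;

- Tokenizer: splits the text into terms according to a rule, for example on whitespace;

- Token Filters: post-process the resulting terms, for example by lowercasing them, removing stop words, or adding synonyms.

The execution flow is as follows:

As the figure shows, text passes from left to right through the Character Filters, the Tokenizer, and the Token Filters, in that order. The order is easy to justify: incoming text is first cleaned up, then split into tokens, and finally the tokens themselves are filtered.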
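The three stages can be mimicked in a few lines of Python. This is only a toy model of the flow, not how Elasticsearch implements it: the function names, the regex-based tag stripping, and the three-word stop list are assumptions chosen for illustration.

```python
import re

# Illustrative stop list only; real analyzers ship a longer one.
STOP_WORDS = {"a", "is", "the"}


def strip_html(text: str) -> str:
    """Character filter: remove anything that looks like an HTML tag."""
    return re.sub(r"<[^>]+>", "", text)


def whitespace_tokenize(text: str) -> list:
    """Tokenizer: split the cleaned text on runs of whitespace."""
    return text.split()


def lowercase(tokens: list) -> list:
    """Token filter: lowercase every token."""
    return [token.lower() for token in tokens]


def remove_stop_words(tokens: list) -> list:
    """Token filter: drop tokens that appear in the stop list."""
    return [token for token in tokens if token not in STOP_WORDS]


def analyze(text: str) -> list:
    """Run the stages in the same order as the figure."""
    cleaned = strip_html(text)              # 1. character filters
    tokens = whitespace_tokenize(cleaned)   # 2. tokenizer
    return remove_stop_words(lowercase(tokens))  # 3. token filters


print(analyze("<p>Elasticsearch is a <b>Search</b> Engine</p>"))
```

Swapping the order would change the result: lowercasing has to run before the stop-word check here, because the stop list is lowercase.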

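ES ships with a number of built-in analyzers:

- Standard Analyzer - the default analyzer; splits on word boundaries and lowercases

- Simple Analyzer - splits on non-letter characters (which are discarded) and lowercases

- Stop Analyzer - lowercases and removes stop words (the, a, is)

- Whitespace Analyzer - splits on whitespace, does not lowercase

- Keyword Analyzer - does not tokenize; the input is emitted unchanged as the output

- Pattern Analyzer - splits with a regular expression, \W+ by default

- Language - analyzers for more than 30 common languages

- Custom Analyzer - a user-defined analyzer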

3. Analyzer API

3.1 Test with a specified analyzer

GET _analyze
{
  "analyzer": "standard",
  "text": "Mastering Elasticsearch , elasticsearch in Action"
}

3.2 Test against a field of an index

POST user/_analyze
{
  "field": "address",
  "text": "北京市"
}

3.3 Test with a custom combination of tokenizer and filters

POST _analyze
{
  "tokenizer": "standard",
  "filter": ["lowercase"],
  "text": "Mastering Elasticsearch"
}

4. ES Analyzers
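The console requests in this article can also be issued from a script, which is handy when comparing several analyzers on the same text. The Python sketch below only builds the `_analyze` request for a named analyzer. The helper name `build_analyze_request` and the base URL `http://localhost:9200` are assumptions for illustration; adjust them to your cluster.

```python
import json
from urllib.request import Request


def build_analyze_request(text, analyzer, index=None,
                          base_url="http://localhost:9200"):
    """Build (but do not send) an _analyze request for the given analyzer.

    base_url is a placeholder for a local, unsecured cluster.
    """
    path = f"/{index}/_analyze" if index else "/_analyze"
    body = json.dumps({"analyzer": analyzer, "text": text},
                      ensure_ascii=False).encode("utf-8")
    return Request(base_url + path, data=body, method="POST",
                   headers={"Content-Type": "application/json"})


request = build_analyze_request("北京市", "standard")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

With a cluster running, passing `request` to `urllib.request.urlopen` and decoding the JSON response should yield the same `tokens` array that the console shows.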

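4.1 Standard Analyzer

- The default analyzer

- Splits text on word boundaries (grammar-based, following the Unicode Text Segmentation rules of UAX #29, rather than a dictionary)

- Lowercases all tokens after splitting

- Keeps stop words (such as in, a, the in English)

It is the default analyzer in ES. It splits the input on word boundaries and then lowercases the resulting tokens. Its stop-word filter is disabled by default.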

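A note on stop words:

In information retrieval, certain characters or words are filtered out automatically during text processing to save storage space and speed up search. These are called stop words. They fall roughly into two groups:

The first group is function words, which are extremely common and carry little meaning of their own, such as "the", "is", "which", and "on".

The second group is lexical words such as "want". They are used so widely that a search engine cannot guarantee truly relevant results for them; they do little to narrow a search and they reduce search efficiency. In practice these words are usually filtered out of the query, which saves index storage and improves search performance.

In real language, however, stop words are sometimes useful. In Shakespeare's famous line "To be or not to be." every word is a stop word. The problem is most visible when stop words are combined with the wildcard (*): are "the", "is", and "on" still stop words?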

4.1.1 English text

GET _analyze
{
  "analyzer": "standard",
  "text": "In 2022, Java is the best language in the world."
}

Result:

The input is split on word boundaries and lowercased (Java becomes java), and stop words such as in are not removed.

In each entry, token is the resulting term, start_offset and end_offset are the character offsets where the term starts and ends in the input, and position is the term's ordinal position.

{
  "tokens": [
    {"token": "in", "start_offset": 0, "end_offset": 2, "type": "<ALPHANUM>", "position": 0},
    {"token": "2022", "start_offset": 3, "end_offset": 7, "type": "<NUM>", "position": 1},
    {"token": "java", "start_offset": 9, "end_offset": 13, "type": "<ALPHANUM>", "position": 2},
    {"token": "is", "start_offset": 14, "end_offset": 16, "type": "<ALPHANUM>", "position": 3},
    {"token": "the", "start_offset": 17, "end_offset": 20, "type": "<ALPHANUM>", "position": 4},
    {"token": "best", "start_offset": 21, "end_offset": 25, "type": "<ALPHANUM>", "position": 5},
    {"token": "language", "start_offset": 26, "end_offset": 34, "type": "<ALPHANUM>", "position": 6},
    {"token": "in", "start_offset": 35, "end_offset": 37, "type": "<ALPHANUM>", "position": 7},
    {"token": "the", "start_offset": 38, "end_offset": 41, "type": "<ALPHANUM>", "position": 8},
    {"token": "world", "start_offset": 42, "end_offset": 47, "type": "<ALPHANUM>", "position": 9}
  ]
}
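To make the offset and position fields concrete, the following Python sketch reproduces them for the sample sentence. It uses a plain `\w+` regular expression as a stand-in for the tokenizer, which is an assumption that happens to agree with the Standard Analyzer on this English sentence; it is not the UAX #29 algorithm and does not behave the same on Chinese text. The function name is hypothetical.

```python
import re


def tokens_with_offsets(text):
    """Approximate token/offset/position output for simple English text."""
    tokens = []
    for position, match in enumerate(re.finditer(r"\w+", text)):
        tokens.append({
            "token": match.group().lower(),   # lowercased term
            "start_offset": match.start(),    # index of first character
            "end_offset": match.end(),        # index just past last character
            "position": position,             # ordinal among emitted tokens
        })
    return tokens


for entry in tokens_with_offsets("In 2022, Java is the best language in the world."):
    print(entry)
```

Note that end_offset is exclusive: "in" covers characters 0 and 1, so its end_offset is 2.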

4.1.2 Chinese text

For Chinese, the Standard Analyzer simply breaks the sentence into individual characters. It has no notion of words, so it is not suitable for Chinese word segmentation.

GET _analyze
{
  "analyzer": "standard",
  "text": "北京市"
}

Result:

{
  "tokens": [
    {"token": "北", "start_offset": 0, "end_offset": 1, "type": "<IDEOGRAPHIC>", "position": 0},
    {"token": "京", "start_offset": 1, "end_offset": 2, "type": "<IDEOGRAPHIC>", "position": 1},
    {"token": "市", "start_offset": 2, "end_offset": 3, "type": "<IDEOGRAPHIC>", "position": 2}
  ]
}

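4.2 Simple Analyzer

- Splits the text at every non-letter character;

- Non-letter characters (digits, punctuation, whitespace) are discarded;

- Lowercases all tokens after splitting;

- Keeps stop words.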

4.2.1 English example

GET _analyze
{
  "analyzer": "simple",
  "text": "In 2022, Java is the best language in the world."
}

Result for English (non-letter characters, including the number 2022, are dropped):

{
  "tokens": [
    {"token": "in", "start_offset": 0, "end_offset": 2, "type": "word", "position": 0},
    {"token": "java", "start_offset": 9, "end_offset": 13, "type": "word", "position": 1},
    {"token": "is", "start_offset": 14, "end_offset": 16, "type": "word", "position": 2},
    {"token": "the", "start_offset": 17, "end_offset": 20, "type": "word", "position": 3},
    {"token": "best", "start_offset": 21, "end_offset": 25, "type": "word", "position": 4},
    {"token": "language", "start_offset": 26, "end_offset": 34, "type": "word", "position": 5},
    {"token": "in", "start_offset": 35, "end_offset": 37, "type": "word", "position": 6},
    {"token": "the", "start_offset": 38, "end_offset": 41, "type": "word", "position": 7},
    {"token": "world", "start_offset": 42, "end_offset": 47, "type": "word", "position": 8}
  ]
}

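4.2.2 Chinese example

For Chinese, a run of adjacent characters is not split at all and is emitted as a single token, so this analyzer is not suitable for Chinese word segmentation.

GET _analyze
{
  "analyzer": "simple",
  "text": "北京市"
}

Result:

{
  "tokens": [
    {"token": "北京市", "start_offset": 0, "end_offset": 3, "type": "word", "position": 0}
  ]
}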
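Both Simple Analyzer results follow from one rule: keep maximal runs of letters and lowercase them. A minimal Python approximation is sketched below. Using `str.isalpha` as the letter test is an assumption; it is close to, but not guaranteed identical to, the letter test Lucene applies. The function name is hypothetical.

```python
from itertools import groupby


def simple_analyze(text):
    """Keep maximal runs of letters, lowercase them, drop everything else."""
    return [
        "".join(run).lower()
        for is_letter, run in groupby(text, key=str.isalpha)
        if is_letter
    ]


print(simple_analyze("In 2022, Java is the best language in the world."))
print(simple_analyze("北京市"))
```

Chinese characters count as letters, which is why "北京市" comes back as a single token while "2022" disappears entirely.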

4.3 Whitespace Analyzer

This one is very simple. As the name says, it splits on whitespace.

- Splits the text on whitespace characters;

- Does not change case;

- Keeps stop words.

4.3.1 English text

As the output shows, the text is split on whitespace only: the number 2022 is kept (together with its trailing comma, as "2022,"), Java keeps its capital letter, and in is retained.

GET _analyze
{
  "analyzer": "whitespace",
  "text": "In 2022, Java is the best language in the world."
}

Result:

{
  "tokens": [
    {"token": "In", "start_offset": 0, "end_offset": 2, "type": "word", "position": 0},
    {"token": "2022,", "start_offset": 3, "end_offset": 8, "type": "word", "position": 1},
    {"token": "Java", "start_offset": 9, "end_offset": 13, "type": "word", "position": 2},
    {"token": "is", "start_offset": 14, "end_offset": 16, "type": "word", "position": 3},
    {"token": "the", "start_offset": 17, "end_offset": 20, "type": "word", "position": 4},
    {"token": "best", "start_offset": 21, "end_offset": 25, "type": "word", "position": 5},
    {"token": "language", "start_offset": 26, "end_offset": 34, "type": "word", "position": 6},
    {"token": "in", "start_offset": 35, "end_offset": 37, "type": "word", "position": 7},
    {"token": "the", "start_offset": 38, "end_offset": 41, "type": "word", "position": 8},
    {"token": "world.", "start_offset": 42, "end_offset": 48, "type": "word", "position": 9}
  ]
}

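4.3.2 Chinese text

For Chinese, the analyzer still splits only on whitespace, so a string without whitespace is emitted whole. This analyzer is not suitable for Chinese word segmentation.

GET _analyze
{
  "analyzer": "whitespace",
  "text": "北京市"
}

Result:

{
  "tokens": [
    {"token": "北京市", "start_offset": 0, "end_offset": 3, "type": "word", "position": 0}
  ]
}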
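The whitespace behaviour is easy to reproduce in Python, which also makes it clear why punctuation stays glued to the neighbouring word. The sketch below is an approximation with a hypothetical function name, using `\S+` to find runs of non-whitespace characters.

```python
import re


def whitespace_analyze(text):
    """Emit every run of non-whitespace characters unchanged, with offsets."""
    return [
        {"token": match.group(), "start_offset": match.start(),
         "end_offset": match.end(), "position": position}
        for position, match in enumerate(re.finditer(r"\S+", text))
    ]


for entry in whitespace_analyze("In 2022, Java is the best language in the world."):
    print(entry)
```

Because nothing is stripped or lowercased, a search for "world" would not match the indexed token "world." under this analyzer.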

4.4 Stop Analyzer

- Splits the text at every non-letter character

- Non-letter characters are discarded

- Lowercases all tokens after splitting

- Removes stop words

4.4.1 English example

Like the Simple Analyzer described above, it discards non-letter characters. In addition, it applies a stop filter, which removes stop words such as the, a, and is.

GET _analyze
{
  "analyzer": "stop",
  "text": "In 2022, Java is the best language in the world."
}

Result:

{
  "tokens": [
    {"token": "java", "start_offset": 9, "end_offset": 13, "type": "word", "position": 1},
    {"token": "best", "start_offset": 21, "end_offset": 25, "type": "word", "position": 4},
    {"token": "language", "start_offset": 26, "end_offset": 34, "type": "word", "position": 5},
    {"token": "world", "start_offset": 42, "end_offset": 47, "type": "word", "position": 8}
  ]
}

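4.4.2 Chinese example

For Chinese, a run of adjacent characters is not split at all and is emitted as a single token, so this analyzer is not suitable for Chinese word segmentation.

GET _analyze
{
  "analyzer": "stop",
  "text": "北京市"
}

Result:

{
  "tokens": [
    {"token": "北京市", "start_offset": 0, "end_offset": 3, "type": "word", "position": 0}
  ]
}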
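One detail in the English result is worth spelling out: the surviving tokens keep positions 1, 4, 5, and 8, because removed stop words still occupy a position. The Python sketch below approximates this by numbering tokens before filtering. The stop list is a small illustrative subset rather than the analyzer's full default list, and `str.isalpha` is again an assumed stand-in for the letter test.

```python
from itertools import groupby

# Illustrative subset of English stop words, not the full default list.
STOP_WORDS = frozenset({"a", "an", "and", "in", "is", "of", "the", "to"})


def stop_analyze(text):
    """Letter-run tokens, lowercased, with stop words removed.

    Positions are assigned before filtering, so gaps remain where
    stop words were dropped.
    """
    letter_runs = [
        "".join(run).lower()
        for is_letter, run in groupby(text, key=str.isalpha)
        if is_letter
    ]
    return [
        (position, token)
        for position, token in enumerate(letter_runs)
        if token not in STOP_WORDS
    ]


print(stop_analyze("In 2022, Java is the best language in the world."))
```

Keeping the gaps matters for phrase queries, which rely on the distance between positions.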

4.5 Keyword Analyzer

It does not tokenize at all. The entire input is emitted as a single term.

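4.6 Pattern Analyzer

It splits text with a regular expression. The default pattern is \W+, so any run of non-word characters (anything other than letters, digits, and the underscore) acts as a separator, and the tokens are lowercased by default. For the sample sentence used above, the output is the same as that of the Standard Analyzer, so it is not shown here.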
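The idea of splitting on \W+ can be tried out in Python. This is a rough sketch with a hypothetical function name: `re.ASCII` is passed on the assumption that the default pattern treats only ASCII letters, digits, and the underscore as word characters, so results for non-ASCII text may differ from what ES returns.

```python
import re


def pattern_analyze(text, pattern=r"\W+"):
    """Split on the separator pattern, drop empty pieces, lowercase the rest."""
    pieces = re.split(pattern, text, flags=re.ASCII)
    return [piece.lower() for piece in pieces if piece]


print(pattern_analyze("In 2022, Java is the best language in the world."))
print(pattern_analyze("Alice,Bob,Carol", pattern=","))
```

Unlike the other analyzers, the regular expression here describes the separators, not the tokens.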

4.7 Language Analyzer

ES provides language analyzers for input in different languages. The analyzer name selects the language.

Let's try english:

Note that language is stemmed to languag, and a stop filter is applied as well, so words such as in, is, and the are removed.

GET _analyze
{
  "analyzer": "english",
  "text": "In 2022, Java is the best language in the world."
}

Result:

{
  "tokens": [
    {"token": "2022", "start_offset": 3, "end_offset": 7, "type": "<NUM>", "position": 1},
    {"token": "java", "start_offset": 9, "end_offset": 13, "type": "<ALPHANUM>", "position": 2},
    {"token": "best", "start_offset": 21, "end_offset": 25, "type": "<ALPHANUM>", "position": 5},
    {"token": "languag", "start_offset": 26, "end_offset": 34, "type": "<ALPHANUM>", "position": 6},
    {"token": "world", "start_offset": 42, "end_offset": 47, "type": "<ALPHANUM>", "position": 9}
  ]
}
