Using Ceph storage in k8s

Contents

- Initial setup
- Using Ceph RBD in k8s
  - volume
  - PV
    - Static PV
    - Dynamic PV
- Using CephFS in k8s
  - volume
  - Static PV

Initial setup

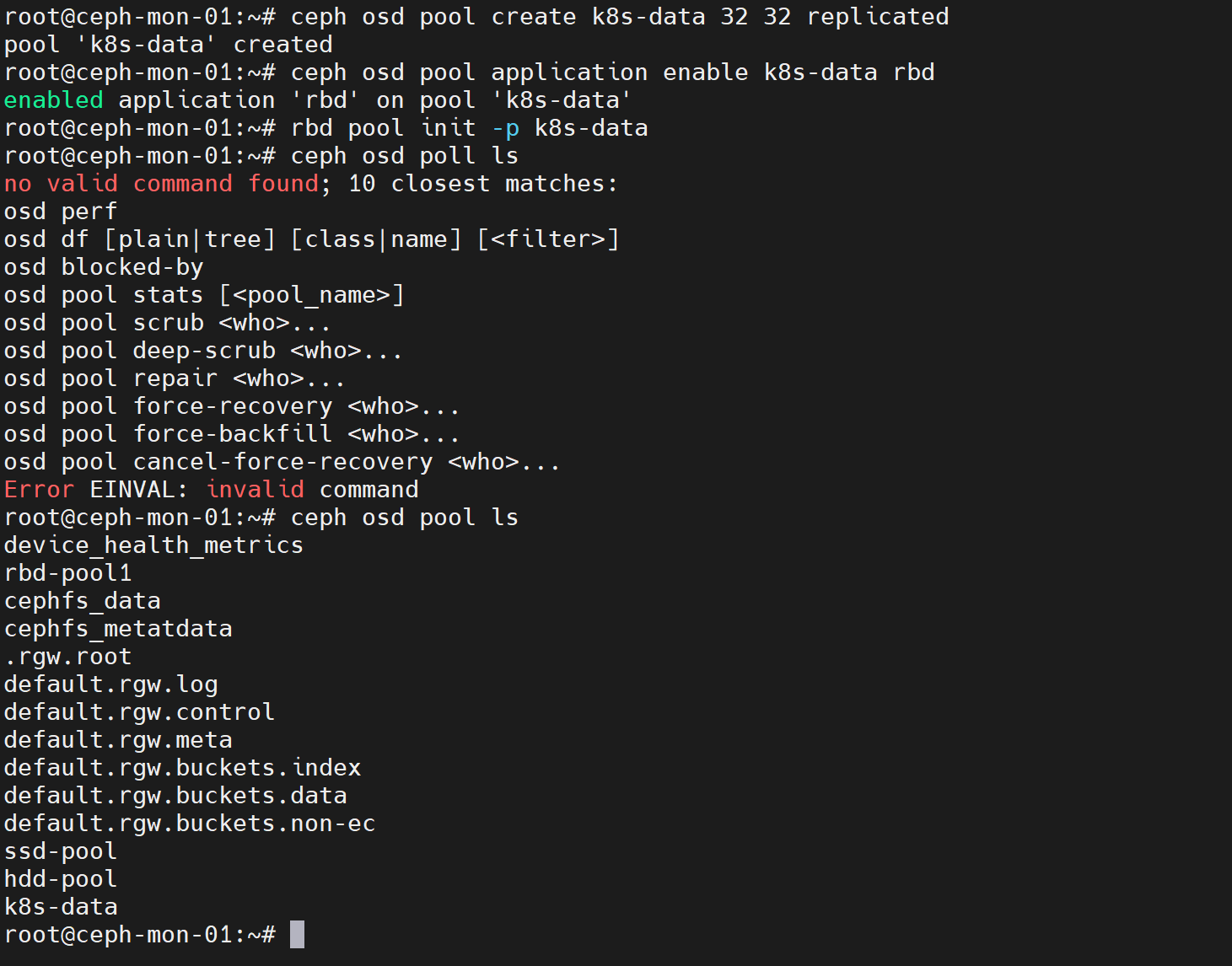

Create an RBD storage pool in Ceph

ceph osd pool create k8s-data 32 32 replicated

ceph osd pool application enable k8s-data rbd

rbd pool init -p k8s-data

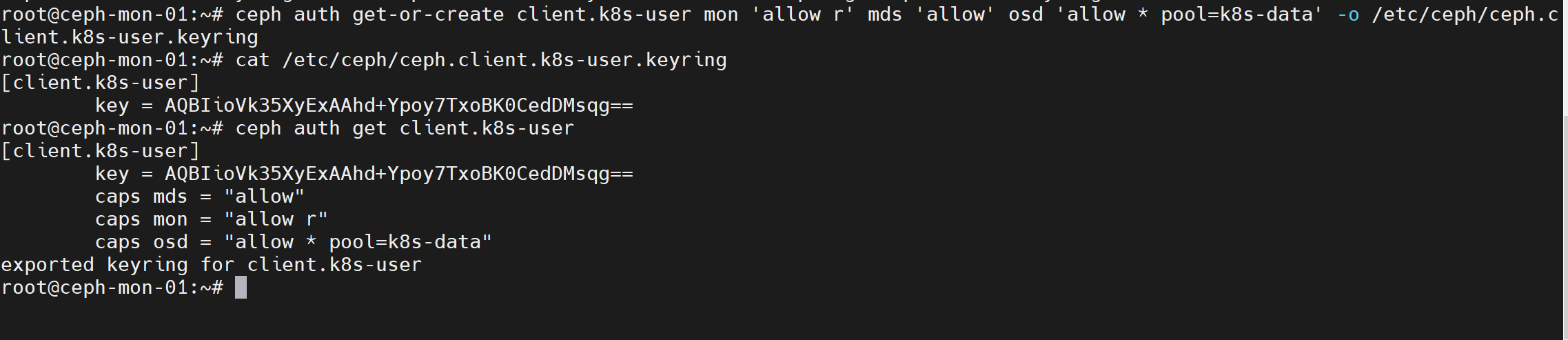

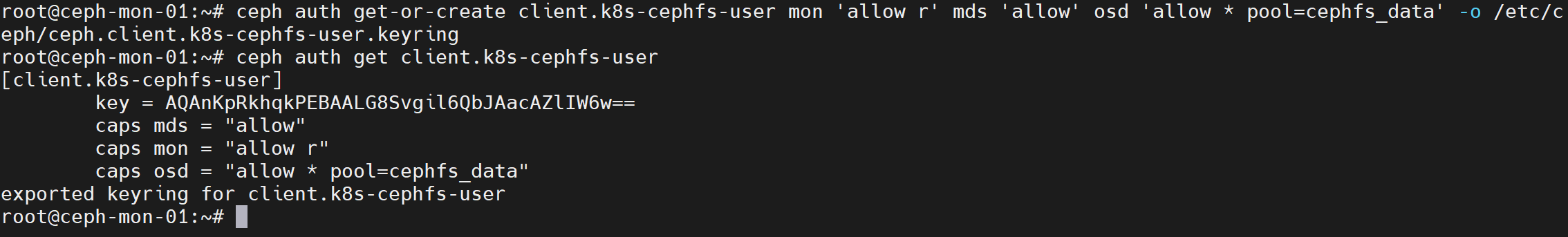

Add Ceph authorization. Two users are needed: one for mounting RBD images and one for mounting CephFS.

ceph auth get-or-create client.k8s-user mon 'allow r' mds 'allow' osd 'allow * pool=k8s-data' -o /etc/ceph/ceph.client.k8s-user.keyring

ceph auth get-or-create client.k8s-cephfs-user mon 'allow r' mds 'allow' osd 'allow * pool=cephfs_data' -o /etc/ceph/ceph.client.k8s-cephfs-user.keyring

Install ceph-common on every node in the k8s cluster

apt -y install ceph-common

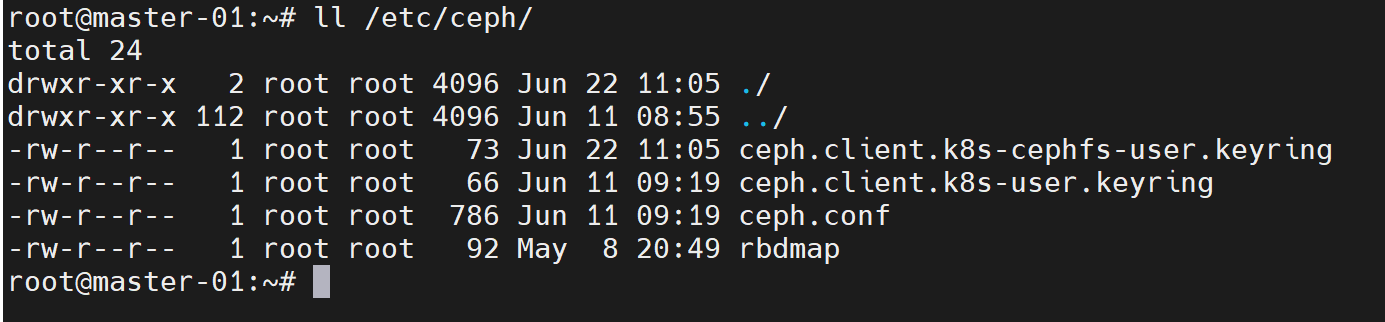

Copy the Ceph configuration file and the keyring files to every node in the k8s cluster

scp /etc/ceph/ceph.conf /etc/ceph/ceph.client.k8s-user.keyring /etc/ceph/ceph.client.k8s-cephfs-user.keyring root@xxx:/etc/ceph/
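Since the copy has to be repeated for every node, it can be wrapped in a small loop. The following is only a sketch: the function name `copy_ceph_files` and the host names in the usage comment are hypothetical, and it assumes root SSH access to each node, as the `scp` command above does.

```shell
# Copy ceph.conf and both keyrings to /etc/ceph/ on every node given as an argument.
copy_ceph_files() {
    for node in "$@"; do
        scp /etc/ceph/ceph.conf \
            /etc/ceph/ceph.client.k8s-user.keyring \
            /etc/ceph/ceph.client.k8s-cephfs-user.keyring \
            "root@${node}:/etc/ceph/" || { echo "copy to ${node} failed" >&2; return 1; }
    done
}

# Usage with hypothetical host names:
#   copy_ceph_files k8s-node1 k8s-node2 k8s-node3
```

The function stops at the first node that fails, so a partial rollout is noticed rather than silently skipped.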

Using Ceph RBD in k8s

Pods in a k8s cluster can mount an RBD image directly through the pod's volume definition, or use it in the form of a PV

volume

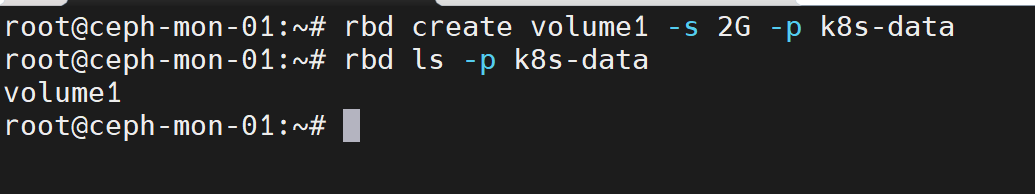

Create the image in the storage pool in advance

rbd create volume1 -s 2G -p k8s-data

Configure the pod to mount volume1 through a volume

apiVersion: v1
kind: Pod
metadata:
  name: pod-with-rbd-volume
spec:
  containers:
  - name: busybox
    image: busybox
    imagePullPolicy: IfNotPresent
    command: ["/bin/sh", "-c", "sleep 3600"]
    volumeMounts:
    - name: data-volume
      mountPath: /data/
  volumes:
  - name: data-volume
    rbd:
      monitors: ["192.168.211.23:6789", "192.168.211.24:6789", "192.168.211.25:6789"]  # mon node addresses
      pool: k8s-data  # pool that holds the image
      image: volume1  # RBD image to mount
      user: k8s-user  # user used to mount the RBD image; defaults to admin
      keyring: /etc/ceph/ceph.client.k8s-user.keyring  # path of the user's keyring file
      fsType: xfs  # filesystem the RBD image is formatted with; defaults to ext4

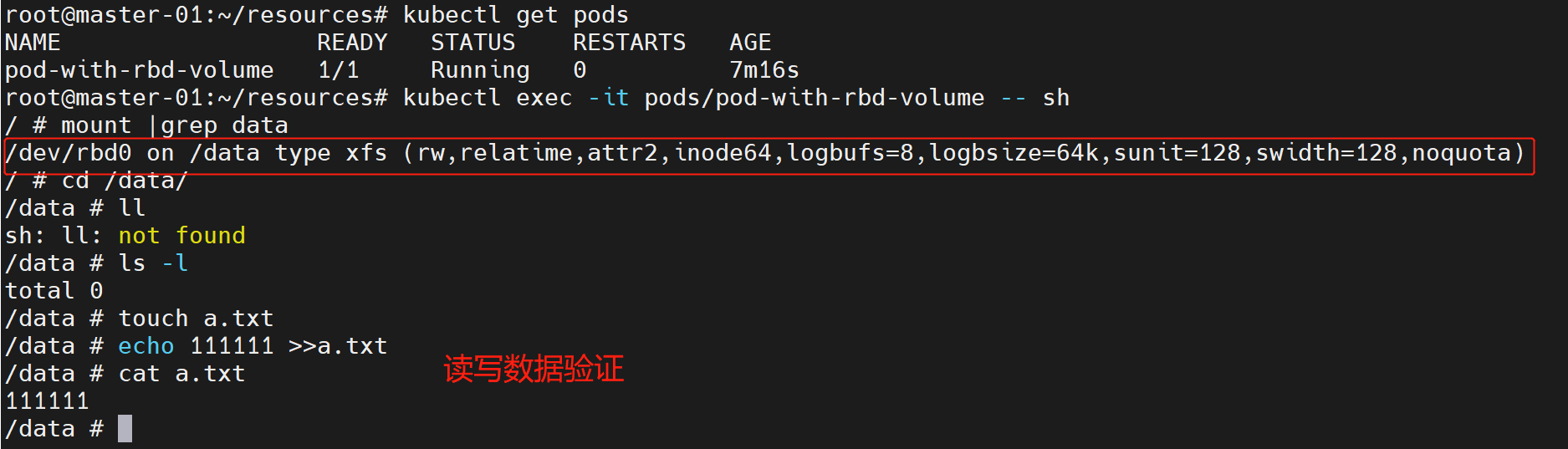

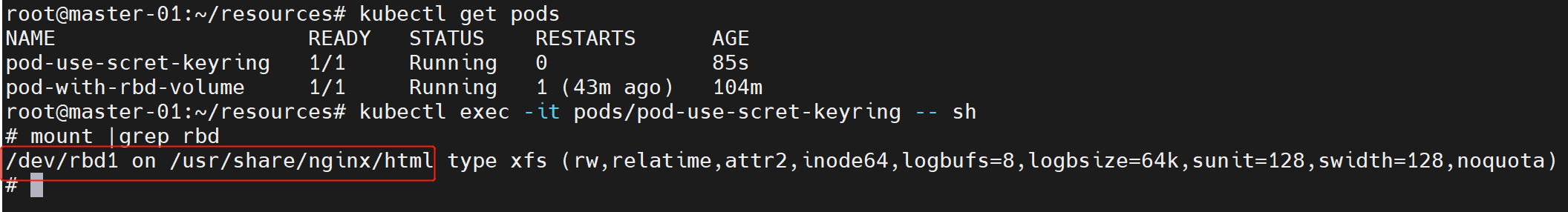

Create the pod in the cluster; once it is ready, enter the pod to verify the mount

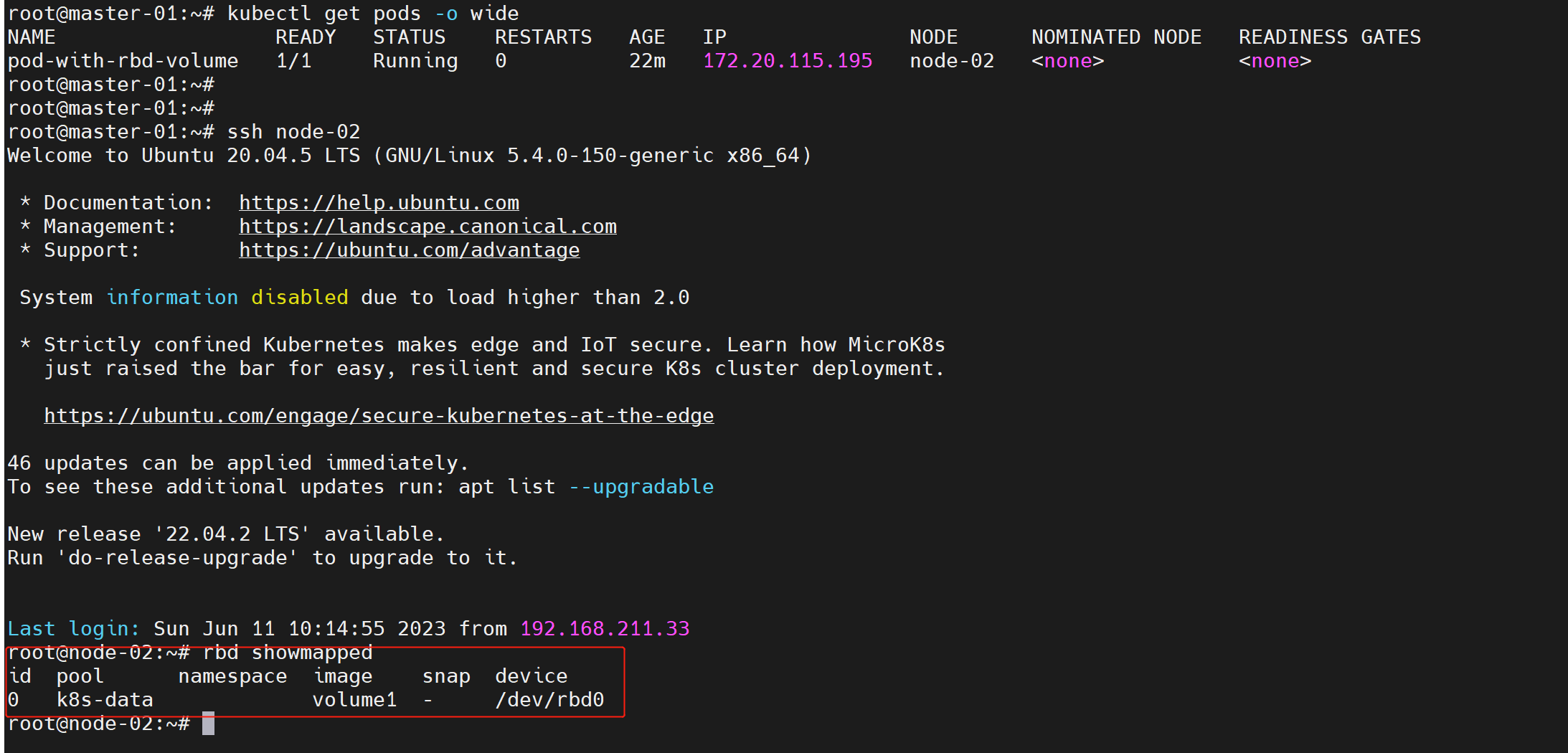

Because the pod uses the host's kernel, the RBD image is actually mapped and mounted on the host

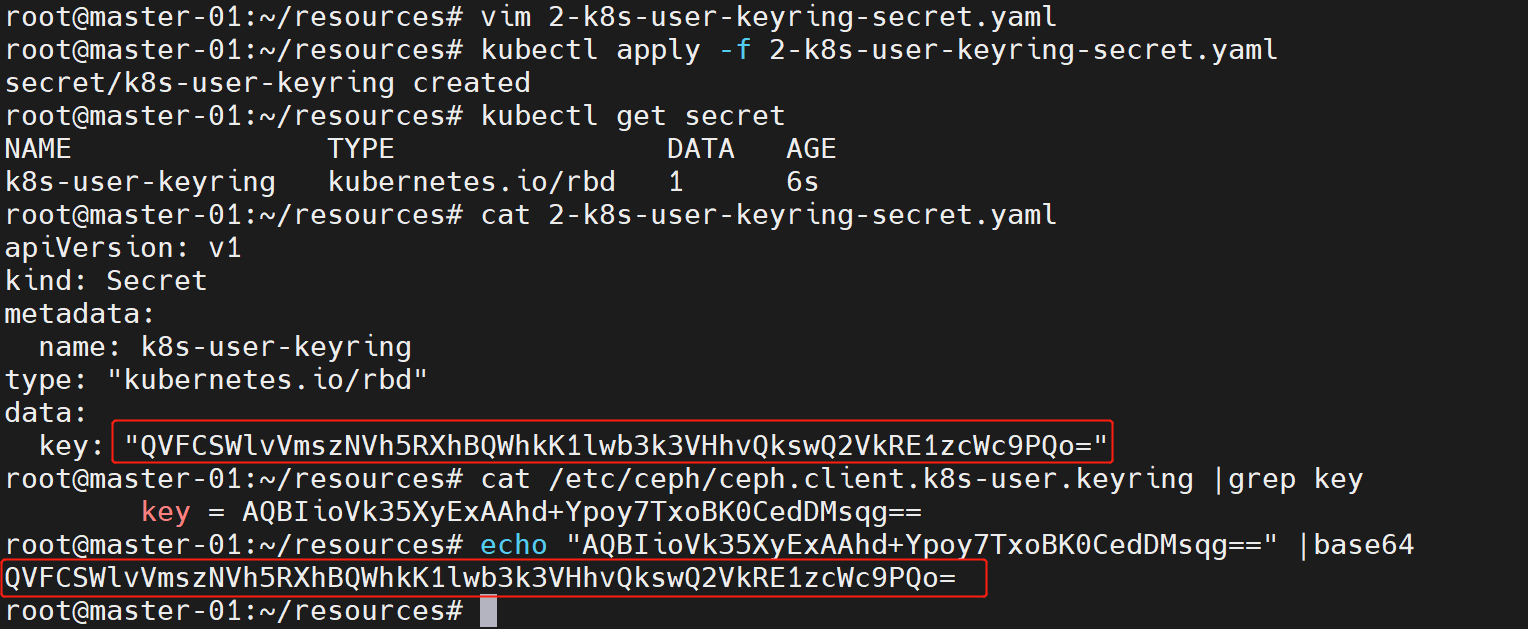
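To look at this from the node side, the mapped devices can be listed with `rbd showmapped`, and the mount table can be filtered for `/dev/rbd*` devices. The helper below is a minimal sketch; the name `list_rbd_mounts` is made up for illustration, and it assumes a Linux node where the mount table is readable in `/proc/mounts` format.

```shell
# Print "<device> <mountpoint>" for every mounted RBD device.
# Reads a mount table in /proc/mounts format; the file defaults to /proc/mounts.
list_rbd_mounts() {
    awk '$1 ~ /^\/dev\/rbd[0-9]+/ { print $1, $2 }' "${1:-/proc/mounts}"
}

# Usage on the node that runs the pod:
#   list_rbd_mounts
```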

The keyring can also be stored in a secret and referenced from the pod through that secret.

For example, store the key of k8s-user in a secret (note that the user's key must be base64-encoded first):

apiVersion: v1
kind: Secret
metadata:
  name: k8s-user-keyring
type: "kubernetes.io/rbd"  # the type must be kubernetes.io/rbd
data:
  key: "QVFCSWlvVmszNVh5RXhBQWhkK1lwb3k3VHhvQkswQ2VkRE1zcWc9PQo="
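The base64 value for the `key` field can be produced from the keyring file. The sketch below assumes the usual keyring layout with a `key = <value>` line; the function name `keyring_key_b64` is hypothetical. It encodes the key with `printf '%s'` so that no newline is included in the encoded payload, on the assumption that the bare key is what is wanted (the sample value above decodes to the key followed by a newline character).

```shell
# Print the base64-encoded key found in a Ceph keyring file.
# Expects a line of the form "key = <value>".
keyring_key_b64() {
    keyring_file="$1"
    # Take the third field of the first line whose first field is "key".
    key="$(awk '$1 == "key" { print $3; exit }' "$keyring_file")"
    [ -n "$key" ] || { echo "no key found in $keyring_file" >&2; return 1; }
    # printf '%s' avoids the newline that echo would append before encoding.
    printf '%s' "$key" | base64
}

# Usage (path from the steps above):
#   keyring_key_b64 /etc/ceph/ceph.client.k8s-user.keyring
```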

Configure the pod to reference the keyring through the secret

apiVersion: v1
kind: Pod
metadata:
  name: pod-use-scret-keyring
spec:
  containers:
  - name: nginx
    image: nginx
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: web
      mountPath: /usr/share/nginx/html/
  volumes:
  - name: web
    rbd:
      monitors: ["192.168.211.23:6789", "192.168.211.24:6789", "192.168.211.25:6789"]
      pool: k8s-data
      image: volume2
      user: k8s-user
      secretRef:  # reference the keyring through the secret
        name: k8s-user-keyring
      fsType: xfs

Create the pod in the cluster; once it is ready, enter the pod to verify

PV

Static PV

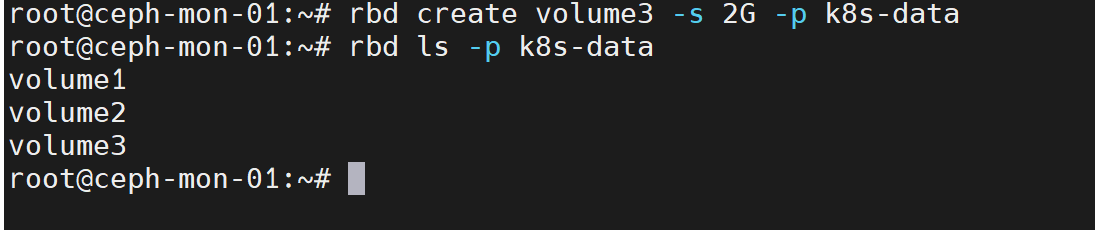

A static PV also requires the image to be created in the storage pool in advance

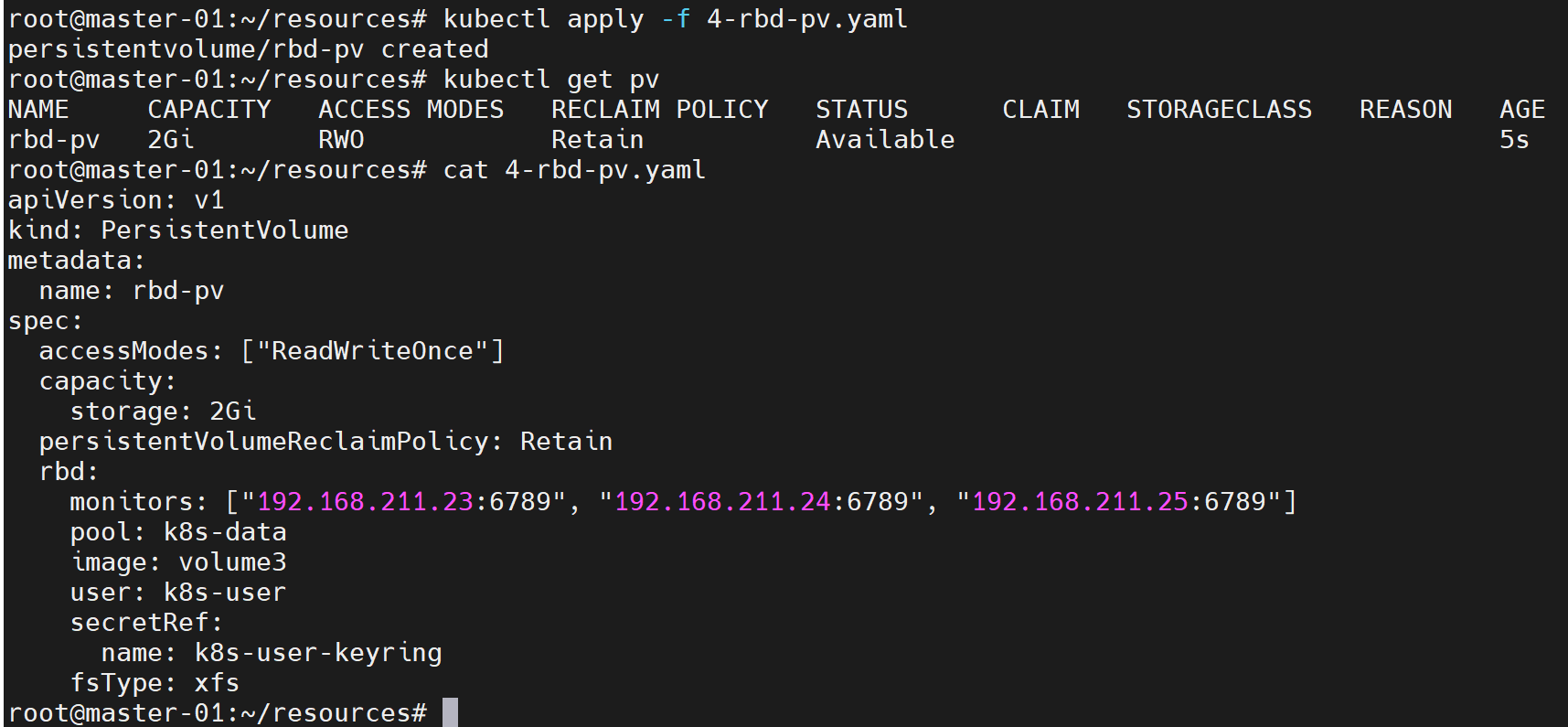
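The manifests in this article reference the images volume2 and volume3, while only the creation of volume1 is shown as text. A possible way to create such images idempotently is sketched below. The function name `ensure_rbd_images` is hypothetical, and the 2G size in the usage comment is an assumption chosen to match the 2Gi capacity used in the PV.

```shell
# Create each named image in the given pool if it does not exist yet.
# Usage: ensure_rbd_images <pool> <size> <image>...
ensure_rbd_images() {
    pool="$1"; size="$2"; shift 2
    for image in "$@"; do
        # "rbd info" exits non-zero when the image is missing.
        if rbd info "${pool}/${image}" >/dev/null 2>&1; then
            echo "${pool}/${image} already exists"
        else
            rbd create "${image}" -s "${size}" -p "${pool}" || return 1
        fi
    done
}

# Usage (size is an assumption):
#   ensure_rbd_images k8s-data 2G volume2 volume3
```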

Create the PV

apiVersion: v1
kind: PersistentVolume
metadata:
  name: rbd-pv
spec:
  accessModes: ["ReadWriteOnce"]
  capacity:
    storage: 2Gi
  persistentVolumeReclaimPolicy: Retain
  rbd:
    monitors: ["192.168.211.23:6789", "192.168.211.24:6789", "192.168.211.25:6789"]
    pool: k8s-data
    image: volume3
    user: k8s-user
    secretRef:
      name: k8s-user-keyring
    fsType: xfs

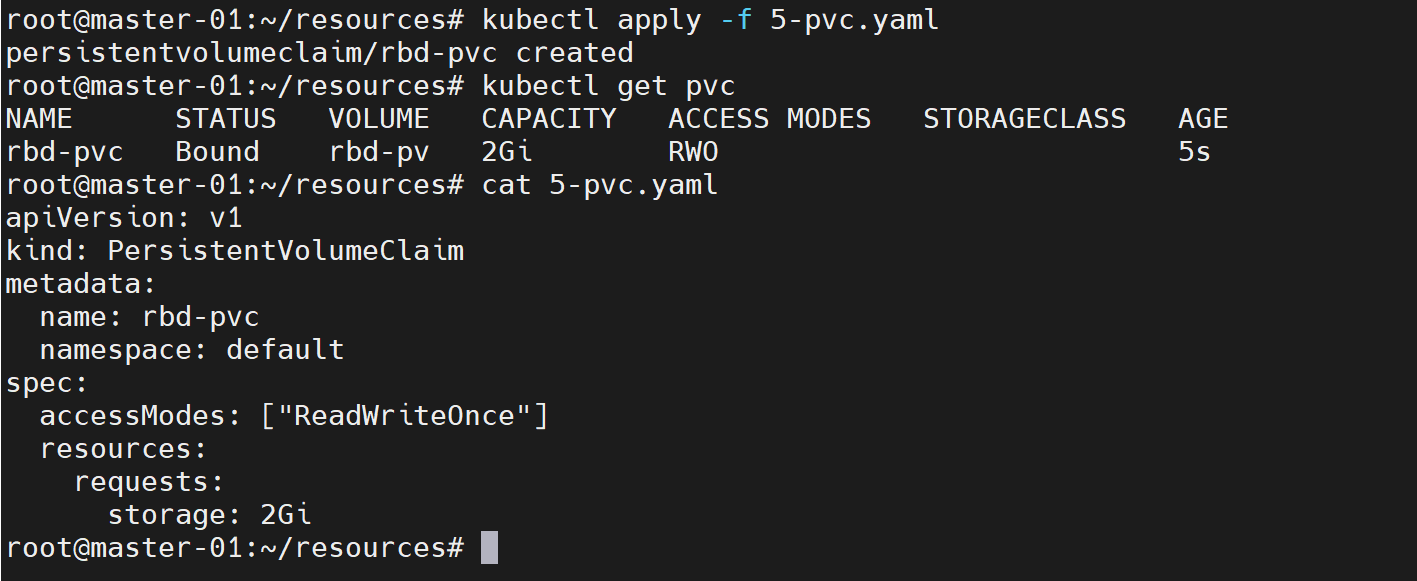

Create the PVC

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: rbd-pvc
  namespace: default
spec:
  accessModes: ["ReadWriteOnce"]
  resources:
    requests:
      storage: 2Gi
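Once the PV and the PVC exist, the claim should reach the Bound phase before a pod can use it. The following sketch polls the claim's `status.phase` with `kubectl get ... -o jsonpath`; the function name `wait_pvc_bound` and its default attempt count and pause are illustrative assumptions.

```shell
# Poll a PVC until its status.phase is "Bound" or the attempts run out.
# Usage: wait_pvc_bound <pvc-name> [namespace] [attempts] [sleep-seconds]
wait_pvc_bound() {
    pvc="$1"; ns="${2:-default}"; attempts="${3:-30}"; pause="${4:-2}"
    i=0
    while [ "$i" -lt "$attempts" ]; do
        phase="$(kubectl get pvc "$pvc" -n "$ns" -o jsonpath='{.status.phase}' 2>/dev/null)"
        if [ "$phase" = "Bound" ]; then
            echo "$pvc is Bound"
            return 0
        fi
        i=$((i + 1))
        sleep "$pause"
    done
    echo "$pvc not Bound after $attempts attempts (last phase: ${phase:-unknown})" >&2
    return 1
}

# Usage:
#   wait_pvc_bound rbd-pvc default
```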

Use the PVC in a pod

apiVersion: v1
kind: Pod
metadata:
  name: pod-use-rbd-pvc
spec:
  containers:
  - name: nginx
    image: nginx
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: web
      mountPath: /usr/share/nginx/html/
  volumes:
  - name: web
    persistentVolumeClaim:
      claimName: rbd-pvc
      readOnly: false

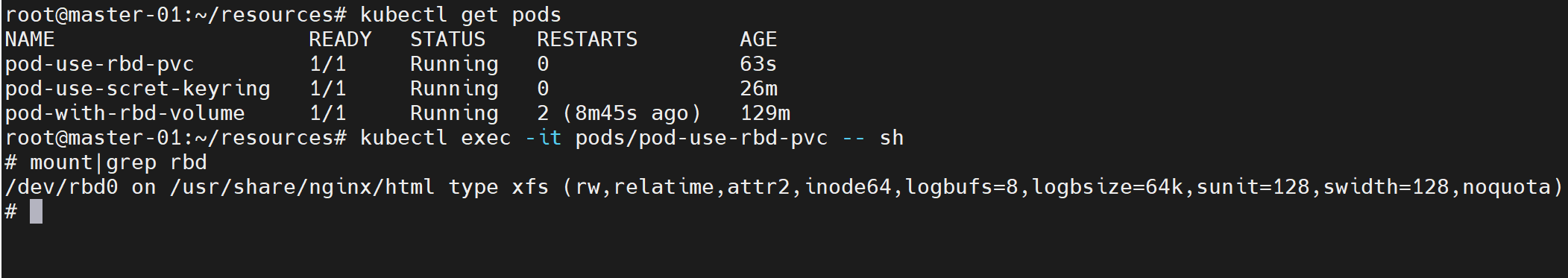

Create the pod in the cluster; once it is ready, enter the pod to verify

Dynamic PV

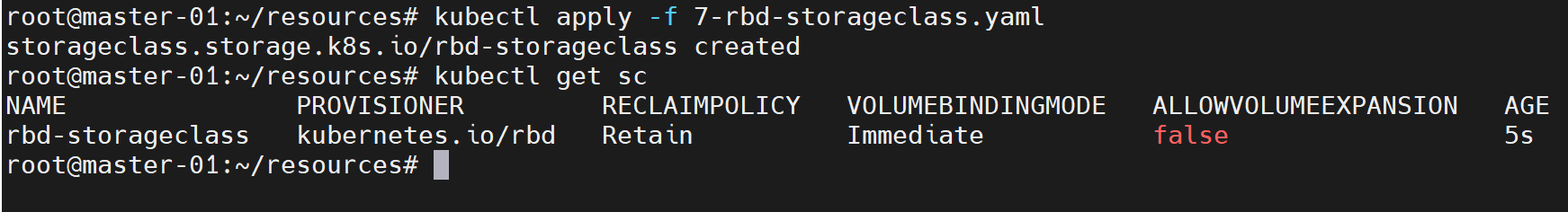

Create the StorageClass

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: rbd-storageclass
provisioner: kubernetes.io/rbd
reclaimPolicy: Retain
parameters:
  monitors: 192.168.211.23:6789,192.168.211.24:6789,192.168.211.25:6789  # mon node addresses
  adminId: k8s-user  # user used to create images in the pool
  adminSecretName: k8s-user-keyring  # secret that holds this user's key
  adminSecretNamespace: default  # namespace of that secret
  pool: k8s-data  # storage pool
  userId: k8s-user  # user used to mount the RBD image
  userSecretName: k8s-user-keyring
  userSecretNamespace: default
  fsType: xfs
  imageFormat: "2"  # format of the created RBD image
  imageFeatures: "layering"  # features enabled on the created RBD image

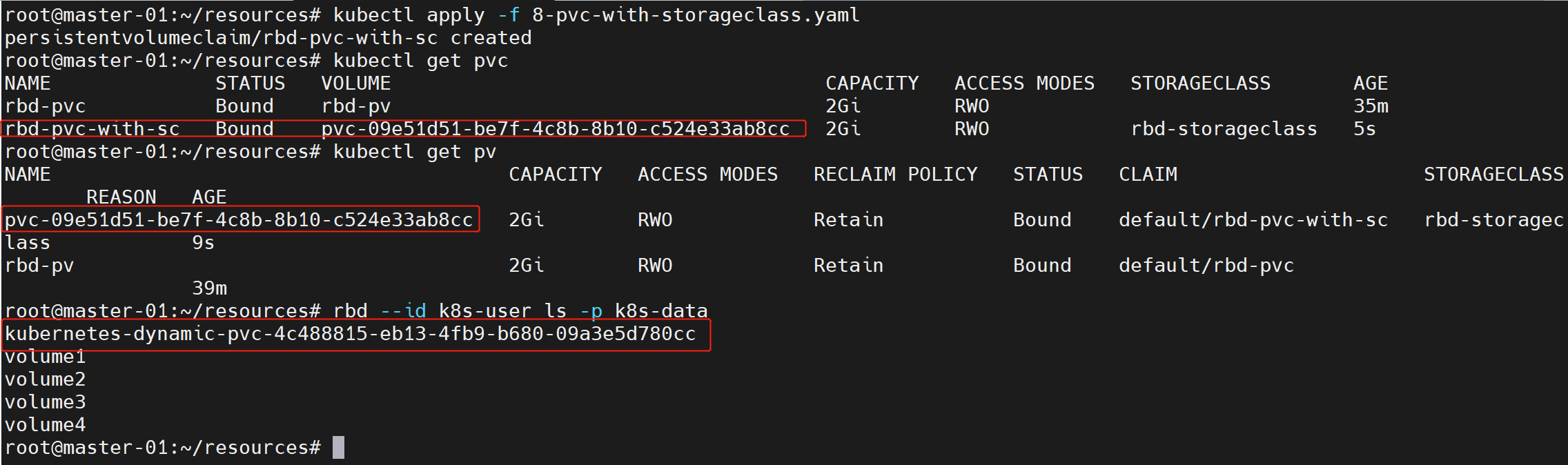

Create the PVC

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: rbd-pvc-with-sc
  namespace: default
spec:
  accessModes: ["ReadWriteOnce"]
  resources:
    requests:
      storage: 2Gi
  storageClassName: rbd-storageclass

Use the dynamically provisioned PVC in a pod

apiVersion: v1
kind: Pod
metadata:
  name: pod-use-rdynamic-pvc
spec:
  containers:
  - name: redis
    image: redis
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: data
      mountPath: /data/redis/
  volumes:
  - name: data
    persistentVolumeClaim:
      claimName: rbd-pvc-with-sc
      readOnly: false

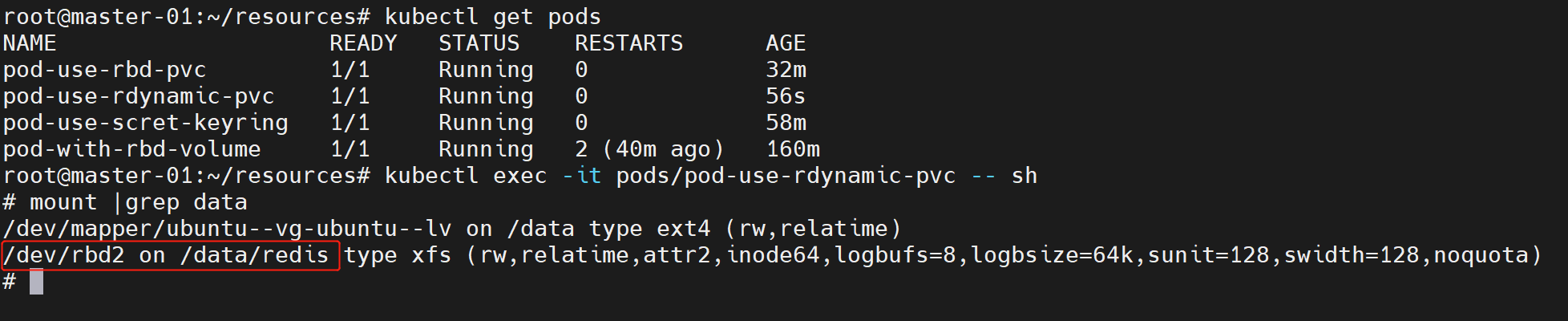

Create the pod in the cluster; once it is ready, enter the pod to verify
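With dynamic provisioning the RBD image is created by the provisioner, so its name is not known in advance. One way to find it is to follow the claim to its PV and read the image name from the PV spec. This is a sketch assuming the in-tree `kubernetes.io/rbd` provisioner used above, where the PV carries an `rbd` volume source; the function name `pvc_rbd_image` is hypothetical.

```shell
# Print the RBD image name backing a PVC by following PVC -> PV -> spec.rbd.image.
# Usage: pvc_rbd_image <pvc-name> [namespace]
pvc_rbd_image() {
    pvc="$1"; ns="${2:-default}"
    pv="$(kubectl get pvc "$pvc" -n "$ns" -o jsonpath='{.spec.volumeName}')" || return 1
    [ -n "$pv" ] || { echo "PVC $pvc is not bound to a PV yet" >&2; return 1; }
    kubectl get pv "$pv" -o jsonpath='{.spec.rbd.image}'
}

# Usage:
#   pvc_rbd_image rbd-pvc-with-sc default
```

The printed name can then be compared with the output of `rbd ls -p k8s-data` on the Ceph side.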

Using CephFS in k8s

CephFS can be mounted by multiple pods at the same time, which allows them to share data

volume

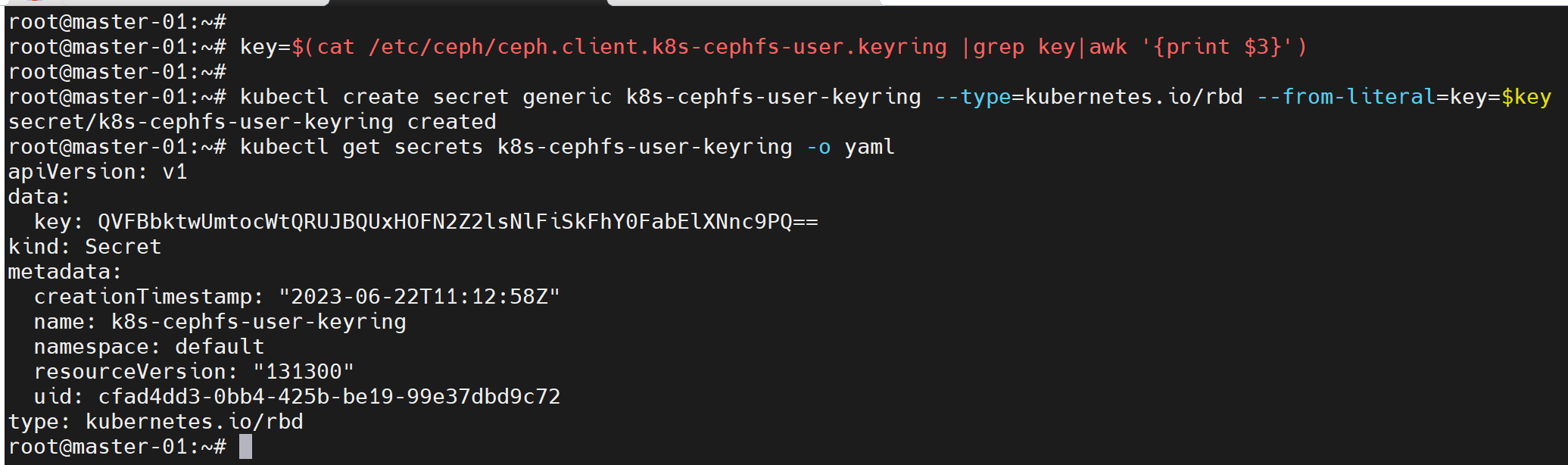

First store the key of the Ceph user client.k8s-cephfs-user created earlier in a secret

key=$(cat /etc/ceph/ceph.client.k8s-cephfs-user.keyring |grep key|awk '{print $3}')

kubectl create secret generic k8s-cephfs-user-keyring --type=kubernetes.io/rbd --from-literal=key=$key

Configure the pods to mount CephFS

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - name: web-data
          mountPath: /usr/share/nginx/html
      volumes:
      - name: web-data
        cephfs:
          monitors:
          - 192.168.211.23:6789
          - 192.168.211.24:6789
          - 192.168.211.25:6789
          path: /
          user: k8s-cephfs-user
          secretRef:
            name: k8s-cephfs-user-keyring

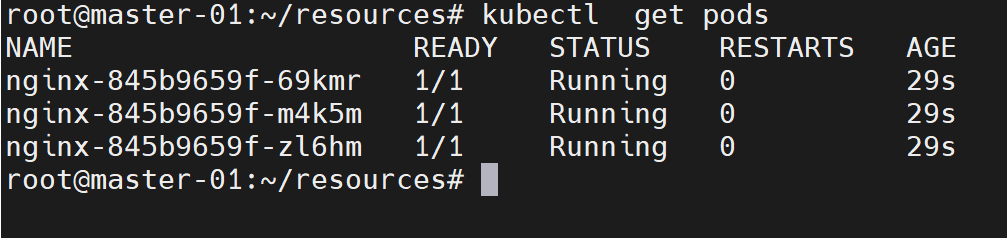

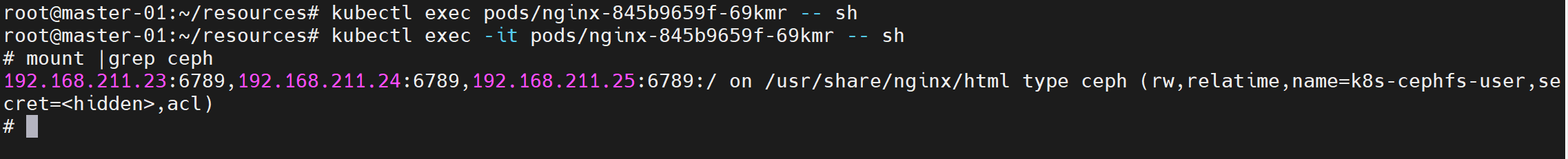

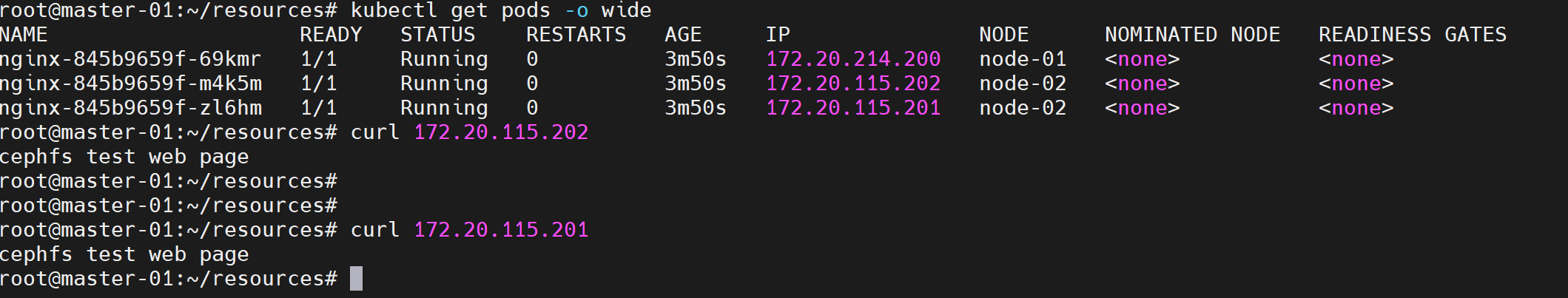

Verify the mount inside a pod

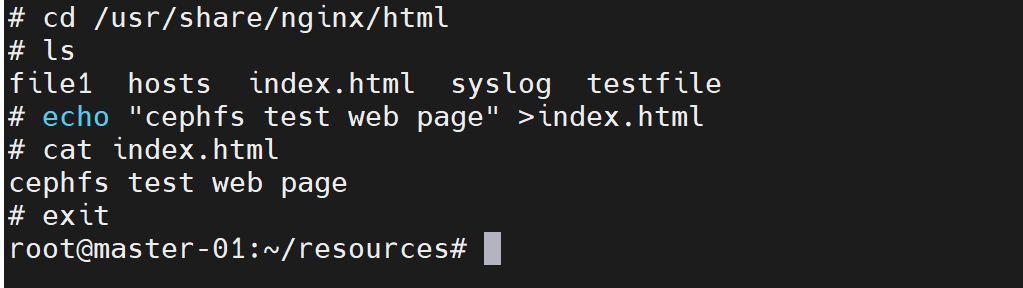

Write a test page from one of the pods, then request it from the other pods to confirm that the data is shared

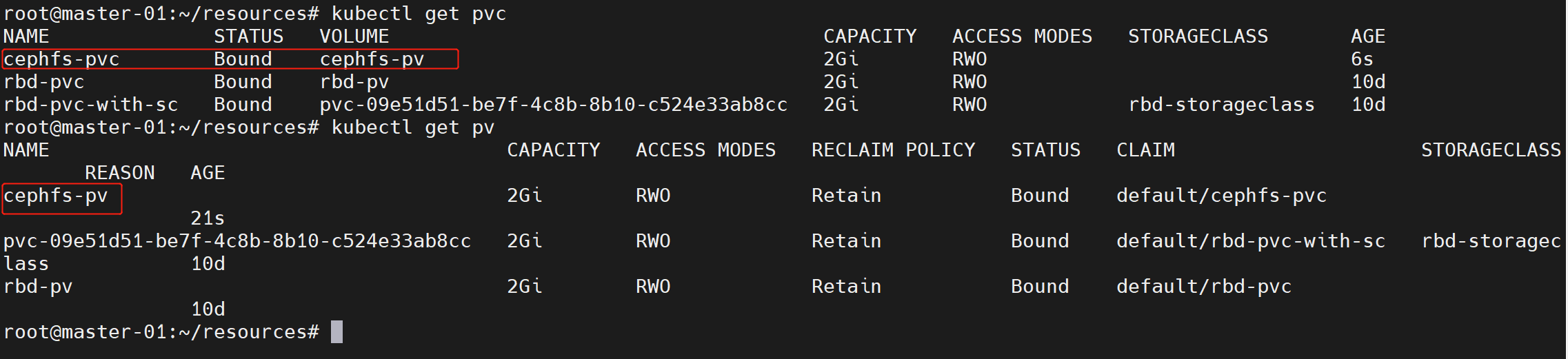
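The same check can be scripted with `kubectl exec`. The sketch below writes a page through the first pod that matches a label selector and reads the file back from every matching pod. The function name `check_shared_page`, the file name `index.html`, and the default text are assumptions made for illustration, and the pods are looked up in the current namespace.

```shell
# Write a test page through the first pod matching a label selector,
# then read it back from every matching pod.
# Usage: check_shared_page <label-selector> <mount-path> [text]
check_shared_page() {
    selector="$1"; dir="$2"; text="${3:-hello from cephfs}"
    pods="$(kubectl get pods -l "$selector" -o jsonpath='{.items[*].metadata.name}')" || return 1
    [ -n "$pods" ] || { echo "no pods match $selector" >&2; return 1; }
    # Split the space-separated pod names into positional parameters.
    set -- $pods
    # Pass path and text as arguments so the shell inside the pod handles quoting.
    kubectl exec "$1" -- sh -c 'printf "%s\n" "$2" > "$1/index.html"' sh "$dir" "$text" || return 1
    for pod in "$@"; do
        got="$(kubectl exec "$pod" -- cat "$dir/index.html")" || return 1
        if [ "$got" = "$text" ]; then
            echo "$pod: ok"
        else
            echo "$pod: mismatch" >&2
            return 1
        fi
    done
}

# Usage with the Deployment above:
#   check_shared_page app=nginx /usr/share/nginx/html
```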

Static PV

Create the CephFS PV and PVC

apiVersion: v1
kind: PersistentVolume
metadata:
  name: cephfs-pv
spec:
  accessModes: ["ReadWriteOnce"]
  capacity:
    storage: 2Gi
  persistentVolumeReclaimPolicy: Retain
  cephfs:
    monitors: ["192.168.211.23:6789", "192.168.211.24:6789", "192.168.211.25:6789"]
    path: /
    user: k8s-cephfs-user
    secretRef:
      name: k8s-cephfs-user-keyring
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: cephfs-pvc
  namespace: default
spec:
  accessModes: ["ReadWriteOnce"]
  resources:
    requests:
      storage: 2Gi

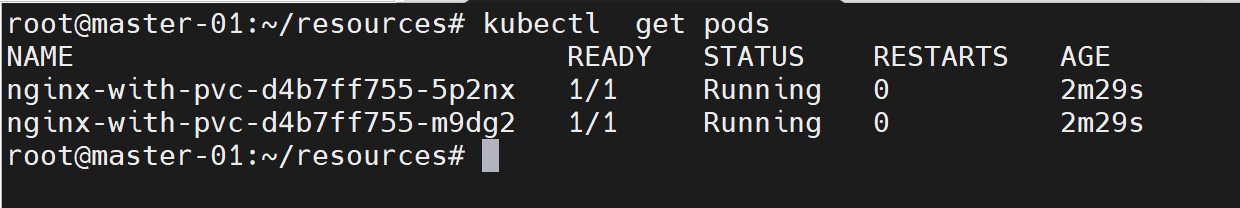

Configure the pods to use cephfs-pv

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-with-pvc
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
      strage: cephfs-pvc
  template:
    metadata:
      labels:
        app: nginx
        strage: cephfs-pvc
    spec:
      containers:
      - name: nginx
        image: nginx
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - name: web
          mountPath: /usr/share/nginx/html/
      volumes:
      - name: web
        persistentVolumeClaim:
          claimName: cephfs-pvc
          readOnly: false

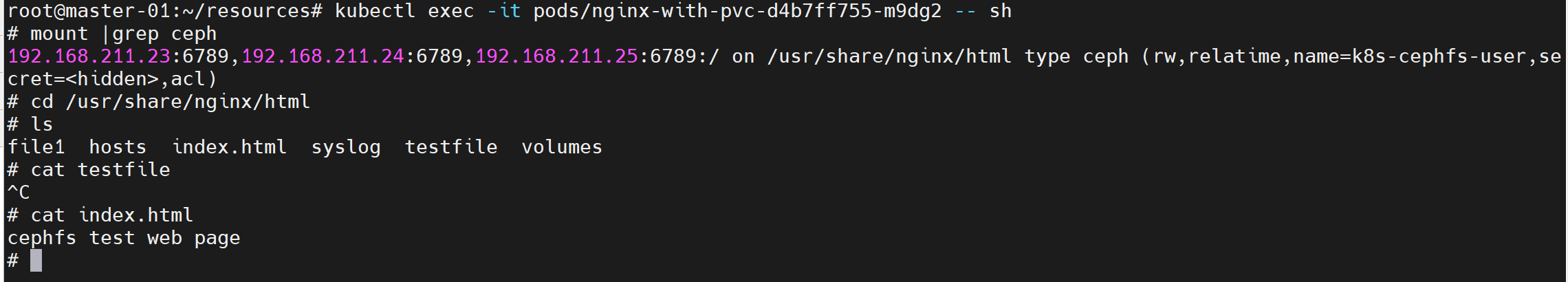

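Enter a pod to verify

The CephFS storage plugin built into k8s does not currently support dynamic PVs. Dynamic provisioning for CephFS can be achieved with the official ceph-csi plugin from the Ceph project: https://github.com/ceph/ceph-csi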
